Jobsite Experiment Asks: How Best to Capture Reality?

LiDAR, 360° videos, point clouds, along with piloted and autonomous drone flights were all put to the test in a first-of-its-kind experiment

The points to be captured at the lab were predefined, then recorded with each technology. Shown here is a drone-captured point cloud.

Photo by Jeff Yoders/ENR, laser scan courtesy of Oracle/Reconstruct

If contractors knew the best way to capture site data for each kind of job, whether it was using laser scans, drones or their own smartphones, it would certainly solve some problems up front. Last November, the Oracle Industry Lab performed an experiment to get to the bottom of the jobsite reality capture debate.

A simple setup: Should a contractor use stationary or mobile LiDAR, robot-mounted scanners, a structured light 3D scanner, piloted or autonomous drone video and photography, 360° videos or regular smartphone videos to best document their project?

That’s the question personnel from Clayco, Pepper Construction, Oracle and Reconstruct sought to answer.

“We wanted to map out tools versus process versus resource allocation in ways that will be informative in that process,” says Mani Golparvar Fard, chief strategy officer and co-founder of Reconstruct, and also a professor at the University of Illinois at Urbana-Champaign.

A series of markers was placed around the lab space. These printed fiducial markers sat in the field of view of the reality mapping technologies so that their locations in the resulting imagery could serve as points of reference against the site coordinate system. The team surveyed the coordinates of these tags for alignment purposes. A 3D building information model and a 2D PDF drawing were also created in the same site coordinate system so that, once the reality map datasets were aligned, they could be compared against the virtual design in one consistent coordinate system.

The setup for conducting these experiments varied widely depending on the technology used, but all of them captured some part of the 10,000-sq-ft lab space. LiDAR and structured light depth cameras were affixed to tripods in two of the experiments. Another capture method involved wearable technology that performs LiDAR scans while the wearer walks around. A similar capture was done using a robotic platform with a mounted LiDAR unit to scan the area. Piloted drone flights were carried out by certified pilots from Pepper and Clayco, and the autonomous drones were set up by the pilots.

“To compare these solutions apples to apples, we wanted to generate deliverables that would be exactly the same,” Golparvar Fard says. “We wanted to make sure we preprocess that data so we could visualize it against everything in the system.”

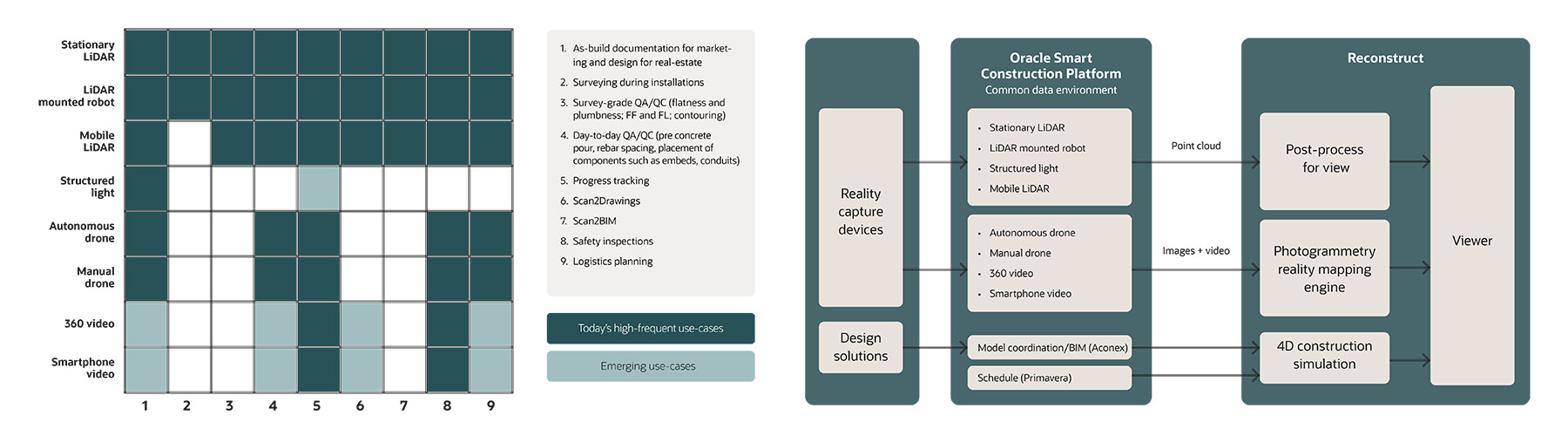

The eight reality capture technologies were measured against nine use cases. The process of getting from captured data to results was also measured.

Graphs courtesy of Oracle and Reconstruct

All reality capture data was brought into Oracle Aconex and Reconstruct either through hardware connections or software apps. The 360° camera connected to the Reconstruct Capture App for smartphones, while E57 files were imported for time-of-flight and structured light devices.

To shield the results from potential errors associated with hardware-specific requirements and to minimize registration errors, a second series of Reconstruct markers was used to align the reality mapping datasets to one another. Reconstruct automatically detected these tags in each reality map dataset, including scanner data as well as smartphone, 360° and drone images and videos.

Next, the 3D mapped positions of these markers were used to align all of the different reality maps against one another and then transform the results using the first set of surveyed fiducial markers into the site coordinate system. While the processing time to go from capturing data to generating reality maps varied from minutes to a few days, the team spent several weeks analyzing the data, conducting what-if analyses, and incorporating input from the experiment’s participants.
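For readers curious how marker-based alignment works in principle, here is a minimal sketch. It is not Reconstruct's method, which the article does not describe; it assumes a simplified 2D plan view, a common least-squares rigid fit (rotation plus translation, no scaling), and made-up marker coordinates. The function names `fit_rigid_2d`, `apply_rigid_2d` and `rms_error` are hypothetical.

```python
import math

def fit_rigid_2d(src, dst):
    """Least-squares rotation + translation that maps src markers onto dst markers.

    src: marker positions in a capture's local coordinates, as (x, y) tuples.
    dst: the same markers' surveyed site coordinates, in the same order.
    Returns (theta_radians, tx, ty).
    """
    n = len(src)
    if n != len(dst) or n < 2:
        raise ValueError("need at least two matched marker pairs")
    # Centroids of both marker sets.
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(p[0] for p in dst) / n
    dy = sum(p[1] for p in dst) / n
    # Sum dot and cross products of the centered pairs; their ratio gives the angle.
    dot = 0.0
    cross = 0.0
    for (ax, ay), (bx, by) in zip(src, dst):
        ax, ay, bx, by = ax - sx, ay - sy, bx - dx, by - dy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation moves the rotated source centroid onto the destination centroid.
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, tx, ty

def apply_rigid_2d(transform, point):
    """Map one local point into the destination coordinate system."""
    theta, tx, ty = transform
    c, s = math.cos(theta), math.sin(theta)
    x, y = point
    return (c * x - s * y + tx, s * x + c * y + ty)

def rms_error(transform, src, dst):
    """Root-mean-square residual at the markers, a rough registration check."""
    total = 0.0
    for p, q in zip(src, dst):
        px, py = apply_rigid_2d(transform, p)
        total += (px - q[0]) ** 2 + (py - q[1]) ** 2
    return math.sqrt(total / len(src))

# Hypothetical marker coordinates, for illustration only.
scan_markers = [(0.0, 0.0), (10.0, 0.0), (10.0, 5.0)]
site_markers = [(100.0, 200.0), (100.0, 210.0), (95.0, 210.0)]
scan_to_site = fit_rigid_2d(scan_markers, site_markers)
```

In a two-stage workflow like the one described, a fit of this kind would be run once between datasets using the shared tags and once against the surveyed markers, with the residual at the markers indicating how well each dataset registered.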

A series of side-by-side evaluations were conducted to explore the relationship between the time and cost it takes to conduct each reality mapping process as well as the time it takes to get results, for each combination of technology, process and team. Results showed the autonomous roving approaches saved time compared to their more fixed counterparts.

“Stationary LiDAR captures everything, but if you look at it attached to a robot, it’s very beneficial because we saw a time savings when you do that autonomously,” says Burcin Kaplanoglu, vice president of innovation at the Oracle Industry Lab. “These two are pretty close to each other. One is a human doing it, the other one is a robot doing it. Right? And the improvement is in this [field time] bucket.”

Quantifying the field time and effort of contractor employees was an important part of the experiment. Full-time employee (FTE) field time was measured in each experiment, with an eye toward lowering it. The team also measured the relationship between cost, for both initial and repetitive processes, and the time from the start of capture to results.

Accuracy was also seen to improve when using the autonomous options. One surprising result was that simple smartphone video and photography ranked high in accuracy compared with the more complex photo and video, 3D-mesh and point-cloud options. The cameras, whether operated by people or autonomously, produced high-quality images and video that were easier to upload and process than other forms of reality capture.

“I wasn’t surprised, but for an audience who just see the phones as a way to take pictures and other common uses, I think they’ll be pleasantly surprised that it’s bringing a huge value,” Kaplanoglu says.

A corner of the lab where mechanical/electrical/plumbing, structural and architectural systems are located in close proximity to each other was used to get a flavor for the kind of complex site conditions that contractors must accurately capture.

The results with smartphone and 360° videos impressed the team and proved effective in confined spaces. The availability of smartphones also offers a unique opportunity for quick reality mapping of underground utilities, rebar cages with embedments and boxouts, in-wall inspections, and exposed MEP under-ceiling installations, the results showed.

“It was refreshing to be a part of an unbiased evaluation exercise during which all the participants got a chance to objectively evaluate the process and the data outcome in a very transparent fashion,” says Tomislav Zigo, CTO and vice president of Clayco. “What was truly surprising is how the quality of data has significantly improved over the past several years and the amount of useful information that this team managed to produce.”